Papers

papers/uhlm-2412-12687

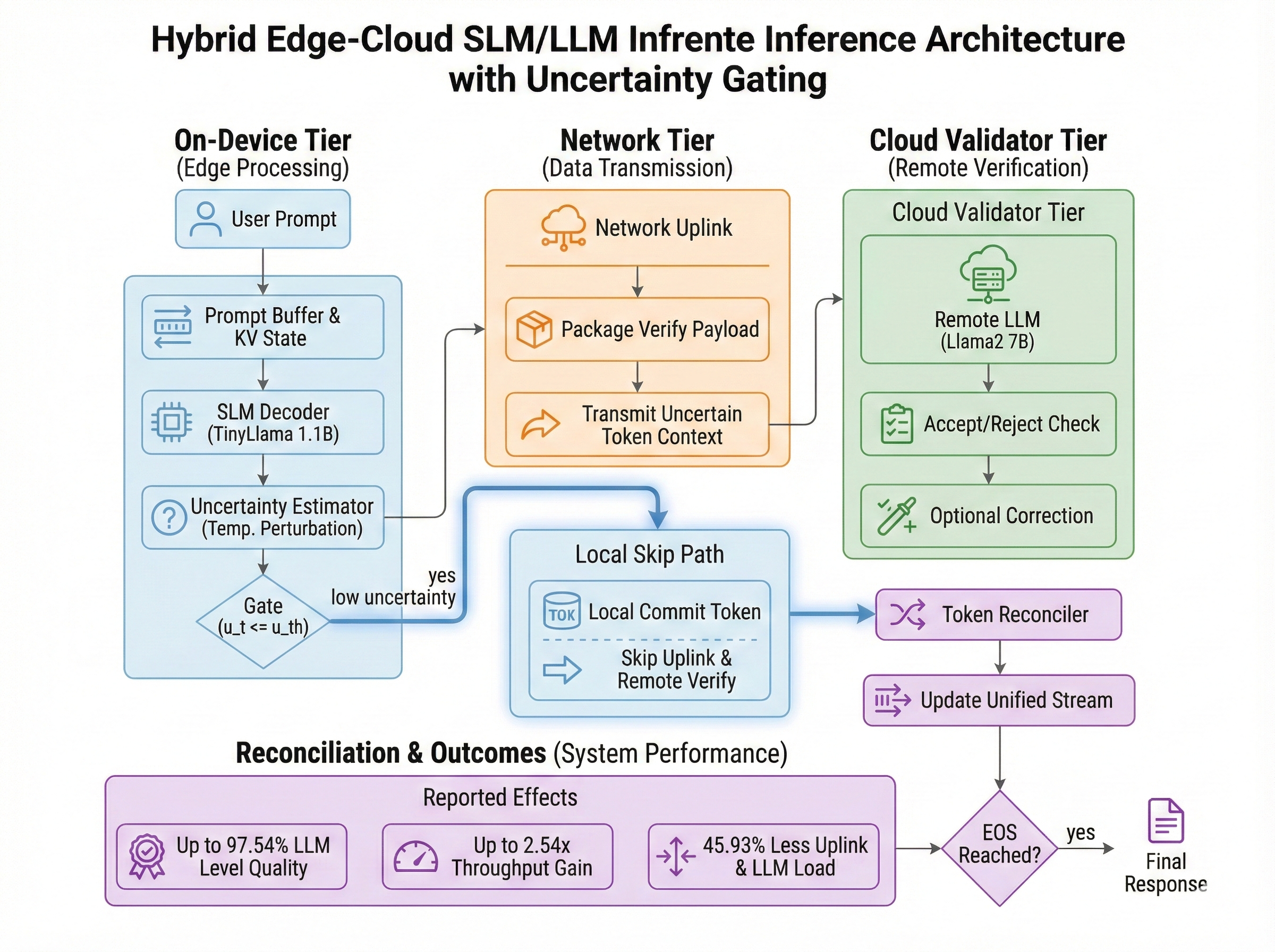

Uncertainty-Aware Hybrid Inference with On-Device Small and Remote Large Language Models

U-HLM lets the on-device SLM opportunistically skip uplink transmission and server-side LLM verification for low-uncertainty tokens.

Source: https://arxiv.org/pdf/2412.12687.pdf

TL;DR

- U-HLM lets the on-device SLM opportunistically skip uplink transmission and server-side LLM verification for low-uncertainty tokens.

- The core empirical finding is a linear relationship between SLM uncertainty (via temperature perturbation) and LLM rejection probability.

- The paper derives an uncertainty threshold and an upper bound on expected rejection risk, giving a quantitative speed-accuracy control knob.

Problem (low token throughput) -> uncertainty-guided skipping -> threshold/risk theory -> faster inference with near-LLM accuracy.

Refined Architecture

Key Takeaways

- U-HLM lets the on-device SLM opportunistically skip uplink transmission and server-side LLM verification for low-uncertainty tokens.

- The core empirical finding is a linear relationship between SLM uncertainty (via temperature perturbation) and LLM rejection probability.

- The paper derives an uncertainty threshold and an upper bound on expected rejection risk, giving a quantitative speed-accuracy control knob.

- In experiments, U-HLM cuts uplink transmissions and LLM computations by 45.93% versus HLM without skipping.

- U-HLM reaches up to 97.54% of LLM inference accuracy while achieving up to 2.54x higher token throughput than HLM without skipping.

Motivation / Contribution

Motivation

- Baseline HLM preserves distributional alignment with LLM inference but suffers low throughput because each token may require large-vocabulary uplink transfer plus dual-model computation.

- Under weak wireless conditions, uplink latency becomes a major bottleneck and directly hurts user-perceived responsiveness.

- The goal is to keep LLM-level quality as much as possible while reducing communication and per-token latency.

Contribution

- Proposes U-HLM, an uncertainty-aware opportunistic hybrid inference pipeline that skips uplink/LLM paths when uncertainty is below threshold.

- Validates a practical uncertainty signal using temperature perturbation, and models rejection probability as a linear function of uncertainty.

- Distinguishes risk-averse and risk-prone skipping regimes, then provides threshold design and expected rejection-risk analysis (Theorem 1).

- Demonstrates effectiveness with TinyLlama-1.1B (SLM) + Llama2-7B (LLM) on Alpaca and FLAN-style datasets.

Detailed Notes

1) Problem Setting and Baseline HLM

- The system has one device (SLM) and one base-station server (LLM), using speculative inference for accept/reject and optional resampling.

- HLM can reproduce LLM-style token distribution, but throughput is constrained by per-token uplink payload and combined SLM+LLM compute cost.

- The paper addresses this by skipping server verification for tokens predicted to be accepted.

2) Core Idea: Uncertainty-Guided Skipping

- At each round, the SLM generates a draft token and computes uncertainty from temperature-perturbed samples.

- If

u(t) <= u_th, U-HLM skips uplink and LLM verification; otherwise it follows standard HLM verification. - A key practical point is that temperature perturbation can be run in parallel with SLM forward computation.

3) Theory: Threshold Design and Risk

- Risk-averse skipping targets tokens predicted to be immediately accepted, preserving distributional consistency more conservatively.

- Risk-prone skipping also skips probabilistically accepted tokens, improving throughput but introducing potential accuracy loss.

- Under i.i.d. assumptions, the paper derives threshold design and an upper bound for expected rejection risk using uncertainty density.

4) Experimental Setup

- Models: TinyLlama-1.1B (SLM), Llama2-7B (LLM)

- Data: 100 random Alpaca prompts, plus QED/CREAK/StrategyQA from FLAN collection

- Metrics: cosine similarity (accuracy), token throughput, transmission rate (TR), true skip rate (TSR)

- Fixed channel/system parameters are used to compare behavior across SNRs.

5) Main Results

- U-HLM outperforms SLM and random-skipping Rand-HLM in cosine similarity across datasets.

- Reported inference quality reaches up to 97.54% of LLM and about 100.05% of HLM (average, cosine similarity basis).

- U-HLM achieves up to 2.54x higher token throughput than HLM without skipping.

- It also reduces uplink transmissions and LLM computations by 45.93%.

6) Interpretation and Limits

- The main strength is turning uncertainty-to-rejection correlation into a systems control variable for communication/computation gating.

- The paper notes that token-sequence synchronization overhead between SLM and LLM can exist when skipping, but is simplified in analysis.

- The study focuses on a single device-server setup; multi-user scheduling/serving extensions remain open.

7) Practical Implication

- For edge-cloud LLM serving, selective verification based on on-device uncertainty can deliver substantial latency gains with limited quality loss.

- The method is especially relevant to mobile assistants and on-device copilots operating under wireless and compute constraints.

References

Linked Mentions

No linked mentions yet.